
9/6/2023 Shannon entropy

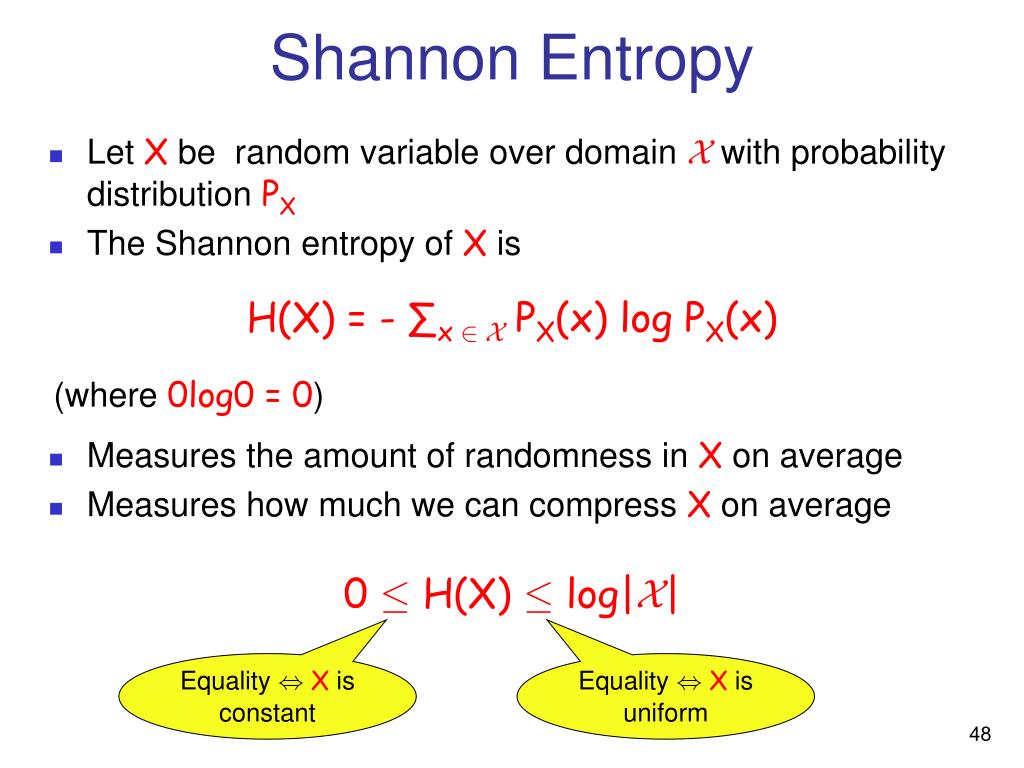

Accurate prediction of molecular properties is essential in the screening and development of drug molecules and other functional materials. Traditionally, property-specific molecular descriptors are used in machine learning models, which in turn requires identifying and developing target- or problem-specific descriptors. Moreover, relying on targeted descriptors does not always make it feasible to increase the prediction accuracy of the model.

We explored the accuracy and generalizability issues using a framework of Shannon entropies, based on the SMILES, SMARTS and/or InChIKey strings of the respective molecules. Using various public databases of molecules, we showed that the prediction accuracy of machine learning models could be significantly enhanced simply by using Shannon entropy-based descriptors evaluated directly from SMILES. Analogous to the partial pressures and total pressure of gases in a mixture, we used atom-wise fractional Shannon entropy in combination with the total Shannon entropy from the respective tokens of the string representation to model the molecule efficiently. The proposed descriptor was competitive in performance with standard descriptors such as Morgan fingerprints and SHED in regression models. Additionally, we found that either a hybrid descriptor set containing the Shannon entropy-based descriptors or an optimized ensemble architecture of multilayer perceptrons and graph neural networks using the Shannon entropies acted synergistically to improve the prediction accuracy. This simple approach of coupling the Shannon entropy framework to other standard descriptors and/or using it in ensemble models could find applications in boosting the performance of molecular property predictions in chemistry and materials science.

Prediction of the physicochemical properties of molecules is one of the most widely used applications of machine learning and is central to chemistry and materials science. Descriptor-based machine learning, which predicts physicochemical properties from characteristic features of the molecules, faces trade-offs between accuracy, interpretability of the results and generalizability to different datasets. Developing novel descriptors could partially address such limitations. However, such descriptors suffer from target specificity and therefore limited generalizability. Deep heterogeneous ensemble learning, on the other hand, could partially address predictive performance and generalizability issues at the expense of interpretability. Therefore, a combination of novel descriptors and deep ensemble learning might be able to address these shortcomings more effectively.

One widely available source for descriptor design is the representation of the molecule itself. It not only provides structural information but also grammatically describes motifs, subtle arrangements, bonds and atomic proximities. In the last few decades, considerable development has taken place in representing molecules in various string formats such as SMILES (simplified molecular-input line-entry system), SMARTS (SMILES arbitrary target specification), InChIKey (International Chemical Identifier Key), SYBYL line notation (SLN), SMIRKS (SMIles ReaKtion Specification) and SELFIES (self-referencing embedded strings). These strings encode different grammatical or representational aspects and could therefore serve as a rich source of molecular and structural information for machine learning applications. Additionally, SMILES representations come in several variants, such as canonical, isomeric, randomized and DeepSMILES. It was demonstrated that, depending on the type of SMILES representation, a neural network based on models such as RNNs (recurrent neural networks) could learn and perform more accurately. This implies that molecular representations could be used to enhance the accuracy as well as the overall prediction performance of neural network models.
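To make the idea of Shannon entropy-based descriptors evaluated directly from SMILES more concrete, here is a minimal Python sketch. It is not the exact procedure from the work described above. It assumes a simplified regex tokenizer, the standard Shannon entropy H = -Σ p_i log2(p_i) over token frequencies, and a "fractional" entropy defined as each token type's share of H (by loose analogy with partial pressure over total pressure). The names `tokenize`, `shannon_entropy` and `fractional_entropies` are hypothetical and chosen only for illustration.

```python
import math
import re
from collections import Counter

# Assumption: a simplified SMILES tokenizer. It recognizes bracket atoms,
# the two-letter halogens Cl and Br, two-digit ring closures (%NN), and
# otherwise treats every single character as one token.
_TOKEN_RE = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|.")


def tokenize(smiles):
    """Split a SMILES string into a list of tokens."""
    return _TOKEN_RE.findall(smiles)


def shannon_entropy(tokens):
    """Total Shannon entropy (in bits) of the token frequency distribution."""
    counts = Counter(tokens)
    n = sum(counts.values())
    if n == 0:
        return 0.0
    return sum(-(c / n) * math.log2(c / n) for c in counts.values())


def fractional_entropies(tokens):
    """Share of the total entropy contributed by each token type.

    Assumption: the fraction is (-p_i * log2 p_i) / H, so the values sum
    to 1 whenever H > 0. This is an illustrative definition only.
    """
    counts = Counter(tokens)
    n = sum(counts.values())
    total = shannon_entropy(tokens)
    if total == 0:
        return {tok: 0.0 for tok in counts}
    return {
        tok: (-(c / n) * math.log2(c / n)) / total
        for tok, c in counts.items()
    }


if __name__ == "__main__":
    tokens = tokenize("CCO")  # ethanol
    print(tokens)
    print(shannon_entropy(tokens))
    print(fractional_entropies(tokens))
```

The total entropy and the per-token fractions could then be appended to an existing feature vector (for example, alongside a fingerprint) to form a hybrid descriptor set. How the features are combined and scaled is left open here.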
